Top 5 AI Server Cooling Methods Ranked (2026): A Decision Guide for High-Density Computing

In the AI arms race, the hardware limits of 2026 are no longer dictated by processing architecture; they are governed strictly by thermodynamics. When a modern GPU cluster runs a large language model (LLM) training job, the hardware is held at or near full utilization for long stretches. If your cooling infrastructure cannot extract that localized heat fast enough, the silicon automatically throttles. The result? You pay for 100% of the compute capacity but may realize far less of it, for example only 70%.
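The cost of throttling can be made concrete with a short calculation. The sketch below is illustrative only: the function name and the price of 1.00 per GPU-hour are assumptions, and the 70% figure is the example used above.

```python
def effective_cost_per_delivered_hour(cost_per_gpu_hour: float,
                                      delivered_fraction: float) -> float:
    """Cost of one hour of compute actually delivered when throttling caps output."""
    if not 0 < delivered_fraction <= 1:
        raise ValueError("delivered_fraction must be in (0, 1]")
    return cost_per_gpu_hour / delivered_fraction


# Hypothetical price (any currency) and the 70% yield from the example above.
nominal = 1.00
throttled = effective_cost_per_delivered_hour(nominal, 0.70)
overhead = throttled / nominal - 1

print(f"{throttled:.2f} per delivered GPU-hour (+{overhead:.0%})")
```

Under these assumptions, each hour of delivered compute costs roughly 43% more than the nominal rate.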

For data center architects and procurement directors, selecting an AI thermal management method is a strategic choice that dictates absolute compute density and maximum hardware ROI. To guide your architectural decisions, we have evaluated the top five thermal management methods of 2026. Ranked from the lowest density baseline (#5) to the absolute thermodynamic limit (#1), here is the definitive guide to matching your cooling infrastructure with your compute density goals.

#5. Traditional Air Cooling

The Failing Baseline of the AI Era

For two decades, blasting chilled air through server racks was sufficient. In 2026, relying on this method for high-density AI computing is an engineering misstep. Air has a low volumetric heat capacity and poor thermal conductivity, so it cannot absorb concentrated GPU heat fluxes at practical flow rates, which leaves it unable to sustain modern machine learning workloads.
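The gap between air and liquid follows from the sensible-heat relation Q = ρ · V̇ · c_p · ΔT. The sketch below is a rough estimate rather than a design calculation: the fluid properties are approximate textbook values near room temperature, and the 10 K coolant temperature rise is an assumption chosen only to compare the two fluids on equal terms.

```python
# Approximate textbook properties near room temperature.
AIR = {"density_kg_m3": 1.2, "cp_j_kg_k": 1005.0}
WATER = {"density_kg_m3": 998.0, "cp_j_kg_k": 4186.0}


def required_flow_m3_s(heat_w: float, delta_t_k: float, fluid: dict) -> float:
    """Volumetric flow needed to carry heat_w with a delta_t_k temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_w / (fluid["density_kg_m3"] * fluid["cp_j_kg_k"] * delta_t_k)


RACK_W = 20_000      # the 20kW threshold discussed in this section
DELTA_T_K = 10.0     # assumed inlet-to-outlet temperature rise

air_flow = required_flow_m3_s(RACK_W, DELTA_T_K, AIR)
water_flow = required_flow_m3_s(RACK_W, DELTA_T_K, WATER)

print(f"Air:   {air_flow:.2f} m^3/s")
print(f"Water: {water_flow * 60_000:.1f} L/min")   # m^3/s -> L/min
print(f"Volume ratio: {air_flow / water_flow:.0f}x")
```

With these assumed values, a 20kW rack needs on the order of 1.7 m^3/s of air but only about 29 L/min of water, a volume ratio of several thousand to one.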

● Best Use Case: Low-power AI inference at the edge, legacy enterprise storage, and basic web hosting where rack densities remain strictly below 15kW.

● Performance Advantage: Air cooling is simple, universally understood, and supported by every legacy data center on the planet. It requires no complex plumbing, no fluid manifolds, and makes hardware maintenance as simple as pulling a server from a dry rack.

● Limitations: The physics of air completely break down past 20kW. To attempt cooling a dense AI rack with air, facilities must run fans at hurricane speeds, creating deafening acoustic environments (>85dB). More critically, air cooling fails to penetrate the deep heat sinks of dense GPUs, inevitably triggering thermal throttling and crippling your AI compute output.

● Cost Logic: Offers the lowest initial Capital Expenditure (CapEx), but incurs the highest long-term Operating Expenditure (OpEx). The massive electricity wasted on mechanical chillers and high-RPM server fans, combined with the financial loss of stranded compute cycles, makes air cooling economically unviable for AI.

● Decision Verdict: Strictly avoid for AI training clusters. Confine air cooling to legacy IT workloads or light, distributed inference nodes.

Decision Trigger: If your rack density exceeds 20kW, relying on this method is no longer an engineering choice; it is active sabotage of your hardware investment.

#4. Hybrid Cooling (Air + Liquid / RDHx)

The Pragmatic Transitional Architecture

Hyperscalers can build greenfield liquid data centers from scratch, but enterprise operators often must integrate AI hardware into existing, air-chilled facilities. Hybrid cooling bridges this massive infrastructural gap, allowing high-density deployments in brownfield sites.

● Best Use Case: Enterprise data centers and colocation facilities that need to deploy 20kW to 45kW AI racks within a legacy building originally designed for 10kW air-cooled loads.

● Performance Advantage: Hybrid systems capture the majority of the brutal AI heat load (70%-80%) via direct-to-chip liquid loops on the GPUs, while allowing the facility's existing Computer Room Air Conditioning (CRAC) units to handle the rest. Alternatively, facilities utilize Rear Door Heat Exchangers (RDHx)—massive liquid-filled radiator doors attached to the rack exhaust. RDHx neutralizes the hot air before it re-enters the room, preventing the AI servers from overwhelming the facility and protecting adjacent legacy IT equipment.

● Limitations: Hybrid cooling is a compromise. It forces the facility to maintain dual infrastructures: complex liquid loops alongside highly inefficient mechanical air blowers. It also caps out around 45kW, rendering it insufficient for the most advanced next-gen GPU architectures.

● Cost Logic: Moderate CapEx. The primary financial advantage of hybrid cooling is cost avoidance. It allows you to participate in high-density AI training without the multi-million dollar capital penalty of tearing down and rebuilding your entire data center.

● Decision Verdict: Choose this method if you are transitioning a legacy facility into the AI era. It is the most pragmatic way to balance standard IT workloads with modern high-density compute demands under one roof.

Decision Trigger: If you are injecting high-density AI hardware into a facility built before 2022, this method is the only viable path to avoid catastrophic facility redesign costs.
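The 70%-80% liquid capture range cited above determines how much heat still lands on the room's air handling. The sketch below splits a rack's load between the two paths and compares the residual with a legacy per-rack air budget. The function name is hypothetical, and the 45kW rack and 10kW budget are taken from the figures in this section.

```python
def hybrid_heat_split(rack_kw: float, liquid_capture_fraction: float):
    """Return (liquid_kw, air_kw) for a rack with partial liquid heat capture."""
    if not 0 <= liquid_capture_fraction <= 1:
        raise ValueError("liquid_capture_fraction must be in [0, 1]")
    liquid_kw = rack_kw * liquid_capture_fraction
    return liquid_kw, rack_kw - liquid_kw


RACK_KW = 45.0               # upper end of the hybrid range in this section
LEGACY_AIR_BUDGET_KW = 10.0  # per-rack air capacity of the legacy room

for fraction in (0.70, 0.80):
    liquid_kw, air_kw = hybrid_heat_split(RACK_KW, fraction)
    fits = air_kw <= LEGACY_AIR_BUDGET_KW
    print(f"{fraction:.0%} capture: {liquid_kw:.1f} kW liquid, "
          f"{air_kw:.1f} kW air, within air budget: {fits}")
```

In this example, a 45kW rack at 70% capture leaves about 13.5 kW for the air system, above a 10kW budget, while 80% capture leaves about 9 kW. The achievable capture fraction therefore matters as much as the headline rack density.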

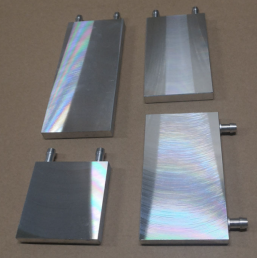

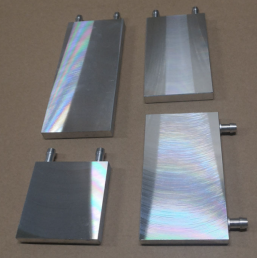

#3. Cold Plate Liquid Cooling (CPLC)

The 2026 Industry Standard for AI GPU Clusters

As AI racks scale aggressively from 40kW up to 120kW (driven by architectures like the NVIDIA Blackwell platform), Cold Plate Liquid Cooling has cemented itself as the definitive industry standard for maximizing hardware ROI without over-engineering the facility.

● Best Use Case: Greenfield data centers and advanced retrofits deploying high-density NVIDIA, AMD, or custom AI training clusters where sustained, unthrottled compute is a financial mandate.

● Performance Advantage: This architecture mounts precision-engineered, vacuum-brazed metal blocks directly onto the GPUs and CPUs. Coolant flows through internal microchannels, instantly absorbing extreme, localized thermal spikes. This targeted extraction improves overall heat exchange efficiency by 50% over air, guaranteeing junction temperatures remain well below throttling thresholds. Your hardware maintains its maximum boost clock indefinitely.

● Limitations: Cold plates are component-specific. They do not capture 100% of the server's heat, meaning secondary airflow is still required for memory and network switches. Furthermore, the approach demands strict, aerospace-grade leak prevention standards and helium-tested structural integrity from the manufacturer.

● Cost Logic: High CapEx for custom plates, manifolds, and Coolant Distribution Units (CDUs). However, the operational economics are overwhelmingly positive. By drastically lowering facility PUE and unlocking 100% of your stranded compute power, the ROI is achieved rapidly.

● Decision Verdict: CPLC is the default architectural choice for modern AI. To succeed, you must partner with a system-level integrator—such as Winshare Thermal—who can align vacuum-brazed cold plate manufacturing with advanced thermal-fluid simulations for your specific motherboard layout.

Decision Trigger: If you are deploying latest-generation GPU clusters targeting 40kW to 120kW, failing to implement this method guarantees stranded compute and severe financial loss.

#2. Direct Liquid Cooling (Direct-to-Die Contact)

The Next-Gen Silicon Safeguard

As semiconductor architectures pack more transistors into smaller nodes, the thermal bottleneck shifts from the cold plate to the Thermal Interface Material (TIM) sitting between the chip and the plate. Direct-to-Die cooling solves this microscopic roadblock.

● Best Use Case: Custom silicon, extreme overclocked multi-chip modules, and highly bespoke environments (80kW to 150kW+) where traditional heat spreaders cannot move heat fast enough to prevent junction degradation.

● Performance Advantage: While standard cold plates sit on top of a processor's integrated heat spreader, direct-to-die cooling eliminates the middleman. The dielectric fluid or specialized microchannel structure comes into direct physical contact with the naked silicon die. By removing the thermal resistance of the TIM entirely, this method extracts heat at the absolute maximum localized rate, preventing the most violent micro-hotspots.

● Limitations: This method requires extreme precision engineering and incredibly tight manufacturing tolerances. It is highly customized and requires deep, early-stage collaboration with the semiconductor manufacturer. Any leakage or structural failure at the die level is instantly catastrophic to the hardware.

● Cost Logic: Exceptionally high CapEx. Because these systems are bespoke to specific, high-end chip architectures, there are few economies of scale. You are paying for elite, ultra-low-tolerance thermal integration.

● Decision Verdict: Choose direct-to-die cooling only when standard vacuum-brazed cold plates hit physical conduction limits against the aggressive junction temperatures of your customized silicon.

Decision Trigger: If your semiconductor junction temperatures exceed safety limits despite standard cold plate application, direct-to-die liquid is your final performance safeguard.
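The TIM bottleneck described in this section can be illustrated with a one-dimensional series thermal resistance model, T_junction = T_coolant + P × ΣR. Every number below is a placeholder chosen for illustration, not measured data for any real chip or cold plate, and the layer names are assumptions.

```python
def junction_temp_c(coolant_c: float, power_w: float, stack_k_per_w: dict) -> float:
    """Junction temperature for heat flowing through resistances in series."""
    return coolant_c + power_w * sum(stack_k_per_w.values())


# Placeholder resistances in K/W (illustrative only).
COLD_PLATE_STACK = {
    "die_to_spreader_tim": 0.010,
    "heat_spreader": 0.005,
    "spreader_to_plate_tim": 0.015,
    "plate_to_coolant": 0.020,
}
# Direct-to-die: the TIM and heat spreader layers are removed from the path.
DIRECT_TO_DIE_STACK = {"die_to_coolant": 0.020}

COOLANT_C = 30.0   # assumed coolant supply temperature
CHIP_W = 1000.0    # assumed chip power

for name, stack in (("cold plate", COLD_PLATE_STACK),
                    ("direct-to-die", DIRECT_TO_DIE_STACK)):
    print(f"{name}: {junction_temp_c(COOLANT_C, CHIP_W, stack):.1f} C")
```

With these placeholder values the junction sits near 80 C with the full stack and near 50 C once the interface layers are removed. The point is the structure of the calculation: each layer removed from the series path lowers junction temperature in direct proportion to chip power.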

#1. Immersion Cooling (Single & Two-Phase)

The Absolute Extreme of Density and Efficiency

When facilities push rack densities into the 150kW to 250kW+ range, traditional boundaries between silicon and coolant must be erased entirely. Immersion cooling represents the absolute thermodynamic limit of heat extraction in 2026.

● Best Use Case: Specialized supercomputing sites, massive-scale LLM training hyperscalers, and greenfield deployments where maximizing compute per square foot is the singular priority.

● Performance Advantage: The entire server chassis (motherboard, GPUs, network cards, and power supplies) is submerged in a tank of non-conductive dielectric fluid. This architecture captures nearly 100% of the generated heat. It completely eliminates the need for server chassis fans, stripping out massive amounts of acoustic noise and parasitic power draw. The result is the lowest facility PUE of the five methods, approaching the theoretical minimum of 1.0.

● Limitations: The operational barrier to entry is massive. Routine server maintenance becomes a complex chemical procedure requiring hoists to lift servers out of tanks. Furthermore, standard data center floors cannot support the immense weight of fluid-filled immersion tanks without heavy structural reinforcement.

● Cost Logic: The highest initial CapEx. Tanks, expensive dielectric fluids (which require constant filtering), and structural reinforcements demand a massive upfront investment. The payoff only materializes over the long term through ultra-low OpEx and extreme compute density at scale.

● Decision Verdict: Reserve immersion cooling exclusively for boundary-pushing hyperscalers. For the vast majority of enterprise AI deployments, the operational friction heavily outweighs the thermodynamic benefits.

Decision Trigger: If your facility lacks reinforced flooring and your IT staff is not trained in dielectric fluid handling, this method will introduce unacceptable operational risks.
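PUE (Power Usage Effectiveness) is total facility power divided by IT equipment power, so 1.0 would mean zero overhead. The sketch below shows how cooling overhead moves the figure. The function name and the load numbers are hypothetical and serve only to show the arithmetic.

```python
def pue(it_kw: float, cooling_kw: float, other_overhead_kw: float = 0.0) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_kw <= 0:
        raise ValueError("it_kw must be positive")
    return (it_kw + cooling_kw + other_overhead_kw) / it_kw


IT_LOAD_KW = 1000.0  # hypothetical IT load

# Hypothetical cooling overheads for two facilities with the same IT load.
print(f"Chiller-heavy facility: PUE = {pue(IT_LOAD_KW, cooling_kw=400.0):.2f}")
print(f"Low-overhead facility:  PUE = {pue(IT_LOAD_KW, cooling_kw=30.0):.2f}")
```

In this example the two facilities come out at 1.40 and 1.03. A real PUE figure also includes power distribution losses, lighting, and other overhead, which the optional `other_overhead_kw` parameter stands in for here.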

Summary Comparison: 2026 AI Thermal Architecture

Use this evaluation framework to align your facility's density goals with your thermal budget.

| Ranking (Low to High Density) | Thermal Method | Rack Density Range | System Impact & Performance | Initial Cost (CapEx) | Strategic Verdict |
| --- | --- | --- | --- | --- | --- |
| #5 | Traditional Air Cooling | < 20kW | Triggers GPU throttling under heavy load. | Lowest | For Low-Power Inference Only |
| #4 | Hybrid Cooling | 20kW – 45kW | Balances legacy air with targeted liquid. | Moderate | Best for Facility Retrofits |
| #3 | Cold Plate Liquid (CPLC) | 40kW – 120kW | Eliminates throttling; max hardware ROI. | High | The 2026 Industry Standard |
| #2 | Direct-to-Die Liquid | 80kW – 150kW+ | Maximum localized heat extraction. | Very High | For Bespoke/Next-Gen Silicon |
| #1 | Immersion Cooling | 100kW – 250kW+ | Captures ~100% heat; requires heavy infra. | Highest | For Extreme Hyperscalers |
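The density ranges in the table can be turned into a simple lookup. The sketch below is one possible encoding, not a sizing tool: the type and function names are hypothetical, a trailing "+" in the table is treated as an open upper bound, and because the ranges overlap, a single density may return several candidate methods.

```python
from typing import NamedTuple, Optional


class Method(NamedTuple):
    rank: str
    name: str
    min_kw: float
    max_kw: Optional[float]      # None = open-ended ("+" in the table)
    max_inclusive: bool = True   # False encodes "< 20kW" for air


METHODS = [
    Method("#5", "Traditional Air Cooling", 0, 20, max_inclusive=False),
    Method("#4", "Hybrid Cooling", 20, 45),
    Method("#3", "Cold Plate Liquid (CPLC)", 40, 120),
    Method("#2", "Direct-to-Die Liquid", 80, None),
    Method("#1", "Immersion Cooling", 100, None),
]


def candidate_methods(rack_kw: float) -> list:
    """Names of every method whose table range contains rack_kw."""
    def in_range(m: Method) -> bool:
        if rack_kw < m.min_kw:
            return False
        if m.max_kw is None:
            return True
        return rack_kw <= m.max_kw if m.max_inclusive else rack_kw < m.max_kw
    return [m.name for m in METHODS if in_range(m)]


for kw in (10, 42, 110, 200):
    print(f"{kw} kW -> {candidate_methods(kw)}")
```

Where several methods qualify, such as a 110kW rack, the cost and operational factors in each section above decide between them.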

Securing Your Compute Infrastructure

Selecting the right cooling architecture is only the first step. Flawless execution requires a partner capable of true system-level integration. At Winshare Thermal Technology, we do not just manufacture vacuum-brazed cold plates; we engineer comprehensive thermal ecosystems. By aligning advanced thermal-fluid simulations with your specific AI workloads, we ensure your infrastructure scales to meet the demands of 2026 and beyond, without leaving a single cycle of compute performance stranded.

Are your current GPUs suffering from micro-throttling during peak training? Is your facility prepared for the transition to 120kW racks? Evaluate your infrastructure today, and contact our engineering team to design a targeted liquid cooling strategy that unlocks the full potential of your AI hardware.
