In the rapidly evolving landscape of artificial intelligence, managing the heat generated by large GPU clusters has shifted from a secondary facility concern to a primary system-level constraint. The top AI thermal management challenges in 2026 center on extreme localized heat fluxes in 20kW to 50kW+ racks, where failing to extract heat fast enough leads directly to compute throttling and financial losses. As machine learning models grow in complexity, the hardware that trains them and runs inference is being pushed toward its physical limits. The days of simply lowering the ambient temperature of a server room are over. Today, engineers and procurement managers must understand that AI thermal management solutions are no longer just about keeping equipment from overheating; they are about unlocking the full computational potential of billion-dollar hardware investments. Let us explore the structural changes in high-density computing cooling and how data centers must adapt to the thermal demands of 2026.

Table of Contents

1. Why Have AI Cooling Challenges Evolved from "Cooling" to "Compute Constraint"?

2. How Do Uneven Heat Distributions Impact AI Server Stability?

3. Why is Traditional Air Cooling Reaching its Efficiency Limit in 2026?

4. How Do Liquid Cooling Systems for AI Servers Recover Stranded Compute Power?

5. What Makes a Data Center Thermal Management System Truly "AI-Ready"?

6. Is Your Infrastructure Ready for Higher GPU Densities?

1. Why Have AI Cooling Challenges Evolved from "Cooling" to "Compute Constraint"?

For decades, IT professionals viewed data center cooling as an operational overhead—a necessary utility to keep standard servers running within acceptable safety margins. In the era of AI, this paradigm is dangerously obsolete.

AI cooling challenges have become compute constraints because modern GPUs automatically reduce their clock speeds when they reach their thermal limits, which directly cuts compute throughput and erodes the ROI of the cluster.

When dealing with traditional enterprise workloads (like web hosting or database management), server utilization rarely sustains 100% across an entire rack for extended periods. AI workloads, however, operate entirely differently. Large Language Model (LLM) training requires clusters of GPUs to run at maximum capacity for weeks or even months continuously.

When a high-power accelerator reaches its junction temperature limit (often around 85°C to 90°C depending on the silicon), the hardware's internal firmware intervenes. To prevent permanent physical damage to the die, the GPU cuts its power draw and operating frequency. This is known as thermal throttling. If your facility lacks adequate data center thermal management for AI, you might pay for 100% of a GPU's processing power but receive, for example, only 70% of its rated performance. Consequently, the core objective of modern thermal engineering is no longer simply "heat removal," but rather "performance preservation."
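The cost of that gap can be put in rough numbers. The sketch below is illustrative only: the 70% figure is the example from the paragraph above, while the cluster size and the hourly cost are hypothetical values chosen for the arithmetic.

```python
def stranded_gpu_equivalents(gpu_count: int, delivered_fraction: float) -> float:
    """GPUs' worth of throughput lost when each GPU delivers only a
    fraction of its rated output."""
    if not 0.0 < delivered_fraction <= 1.0:
        raise ValueError("delivered_fraction must be in (0, 1]")
    return gpu_count * (1.0 - delivered_fraction)


def cost_per_effective_gpu_hour(hourly_cost: float, delivered_fraction: float) -> float:
    """Cost of one hour of full-speed-equivalent compute on a throttled GPU."""
    if not 0.0 < delivered_fraction <= 1.0:
        raise ValueError("delivered_fraction must be in (0, 1]")
    return hourly_cost / delivered_fraction


# Hypothetical cluster: 1,000 GPUs at an assumed 2.00 per GPU-hour,
# delivering 70% of rated throughput (the example figure from the text).
lost = stranded_gpu_equivalents(1000, 0.70)
cost = cost_per_effective_gpu_hour(2.00, 0.70)
print(f"stranded capacity: about {lost:.0f} GPUs")
print(f"effective cost per full-speed GPU-hour: about {cost:.2f}")
```

Under these assumptions, roughly 300 GPUs' worth of capacity is paid for but never delivered.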

2. How Do Uneven Heat Distributions Impact AI Server Stability?

Traditional data centers were built around the concept of uniform heat dissipation. Aisles were flooded with cold air under the assumption that every 1U server in a rack generated roughly the same amount of heat. AI architectures completely shatter this assumption.

Uneven heat distributions severely impact AI server stability by creating extreme localized hotspots. Because AI systems concentrate massive thermal output onto incredibly small silicon dies, traditional uniform cooling fails to penetrate these critical zones, leading to unpredictable system crashes and hardware fatigue.

High-density computing cooling must address the reality of modern motherboard layouts. An AI server is not a homogeneous box; it contains a dense array of specialized components. You have ultra-high-wattage GPUs grouped tightly together alongside HBM (High Bandwidth Memory), NVSwitches, and massive power delivery networks. The heat is not distributed evenly. A single GPU package might output 1000W+ in a footprint of just a few square inches, creating a violent thermal spike, while adjacent storage drives remain relatively cool.
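To make the contrast concrete, here is a minimal sketch that converts package power and footprint into an average heat flux. The 1000 W figure comes from the text; the 3 square inch footprint (one reading of "a few square inches") and the storage drive values are assumptions for illustration.

```python
CM2_PER_IN2 = 6.4516  # exact: (2.54 cm) squared


def heat_flux_w_per_cm2(power_w: float, area_in2: float) -> float:
    """Average heat flux over a footprint given in square inches."""
    if area_in2 <= 0:
        raise ValueError("area_in2 must be positive")
    return power_w / (area_in2 * CM2_PER_IN2)


# 1000 W GPU package over an assumed 3 in^2 footprint.
gpu_flux = heat_flux_w_per_cm2(1000.0, 3.0)
# Hypothetical storage drive: 10 W spread over 10 in^2.
drive_flux = heat_flux_w_per_cm2(10.0, 10.0)

print(f"GPU package: {gpu_flux:.1f} W/cm^2")
print(f"storage drive: {drive_flux:.2f} W/cm^2")
```

This is an average over the whole footprint; the flux at hotspots on the die itself would be higher than the package-level figure.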

Furthermore, AI models process data in bursts. This causes severe thermal cycling—the hardware rapidly heats up during a processing surge and cools slightly during data transfer phases. This relentless expansion and contraction of dissimilar materials (silicon, copper, solder) causes mechanical fatigue over time. If the thermal management solution cannot instantly react to these severe, concentrated heat spikes, the resulting localized overheating causes frequent micro-stutters, data transmission errors, and ultimately, premature component failure.

3. Why is Traditional Air Cooling Reaching its Efficiency Limit in 2026?

The physics of moving air are rigid. Air has a low volumetric heat capacity: because it is so thin, each cubic meter can absorb very little thermal energy before it must be exhausted and replaced. In the face of rapidly rising rack power, forcing air through server racks is becoming an exercise in futility.

Traditional air cooling is reaching its efficiency limit because AI compute environments have pushed rack densities from a manageable 5–10 kW to an extreme 20–50 kW per rack. Air lacks the thermal mass to absorb this density, causing efficiency to plummet and power costs to skyrocket.

Data reveals a stark structural shift in data center economics. Historically, a standard enterprise rack consumed 5 to 10 kW of power. Today, a single rack housing advanced AI accelerators effortlessly consumes 20 to 50 kW, with some specialized supercomputing clusters pushing even higher. To cool a 40kW rack with air, facility managers must install massive, high-RPM fans that sound like jet engines and require massive amounts of electricity just to spin.
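A rough energy balance shows why. In steady state, the heat carried away equals density × volumetric flow × specific heat × coolant temperature rise. The sketch below applies that to the 40kW rack mentioned above; the fluid properties are approximate room-temperature values, and the temperature rises (15 K for air, 10 K for water) are assumptions, not design figures.

```python
# Approximate fluid properties near room temperature.
AIR_DENSITY = 1.2       # kg/m^3
AIR_CP = 1005.0         # J/(kg*K)
WATER_DENSITY = 997.0   # kg/m^3
WATER_CP = 4180.0       # J/(kg*K)

M3S_TO_CFM = 2118.88    # cubic feet per minute in one m^3/s


def volumetric_flow_m3s(heat_w: float, density: float, cp: float, delta_t_k: float) -> float:
    """Steady-state flow needed to carry heat_w with a coolant
    temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_w / (density * cp * delta_t_k)


RACK_HEAT_W = 40_000.0  # the 40kW rack from the text

air_flow = volumetric_flow_m3s(RACK_HEAT_W, AIR_DENSITY, AIR_CP, delta_t_k=15.0)
water_flow = volumetric_flow_m3s(RACK_HEAT_W, WATER_DENSITY, WATER_CP, delta_t_k=10.0)
capacity_ratio = (WATER_DENSITY * WATER_CP) / (AIR_DENSITY * AIR_CP)

print(f"air:   {air_flow:.2f} m^3/s ({air_flow * M3S_TO_CFM:.0f} CFM)")
print(f"water: {water_flow * 60_000:.1f} L/min")
print(f"volumetric heat capacity ratio: {capacity_ratio:.0f}x")
```

Under these assumptions the air path has to move a little over 2 cubic meters every second through a single rack, while a water loop needs under one liter per second for the same heat load.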

Cooling systems currently account for 20% to 40% of a data center's total energy consumption. As operators attempt to push more cold air into high-density racks, the marginal return drops sharply: by the fan affinity laws, fan power rises roughly with the cube of airflow, so doubling the airflow costs about eight times the fan power. Fan and chiller energy becomes a growing share of the facility's total draw, which inflates its Power Usage Effectiveness (PUE). Liquid, on the other hand, is significantly more efficient at capturing and transporting heat.
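PUE is defined as total facility energy divided by IT equipment energy, so the cooling share translates directly into a PUE figure. The sketch below uses the 20% and 40% cooling shares quoted above; the 5% share for other overhead (power distribution losses, lighting) is an assumption for illustration.

```python
def pue_from_overhead_shares(cooling_share: float, other_overhead_share: float = 0.0) -> float:
    """PUE = total facility energy / IT energy, with both overheads
    expressed as fractions of total facility energy."""
    it_share = 1.0 - cooling_share - other_overhead_share
    if it_share <= 0.0:
        raise ValueError("overhead shares must leave a positive IT share")
    return 1.0 / it_share


for cooling_share in (0.20, 0.40):
    pue = pue_from_overhead_shares(cooling_share, other_overhead_share=0.05)
    print(f"cooling at {cooling_share:.0%} of total energy -> PUE of about {pue:.2f}")
```

With these inputs, moving from a 20% to a 40% cooling share raises PUE from roughly 1.33 to roughly 1.82.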

| Metric | Traditional Air Cooling (Pre-AI Era) | Modern AI Thermal Management (2026) |
| --- | --- | --- |
| Typical Rack Power Density | 5 kW to 10 kW | 20 kW to 50 kW+ |
| Cooling Medium | Chilled ambient air | Direct-to-chip liquid (Water/Dielectric) |
| Heat Target Focus | Entire room / Uniform chassis cooling | Localized, silicon-level precision cooling |
| Heat Exchange Efficiency | Baseline | 30% to 50% higher than air |
| Impact on Compute Output | Often causes thermal throttling under heavy load | Maintains stable junction temps for max output |
| PUE Impact | High (Massive fan and chiller power required) | Significantly lowers overall facility energy usage |

Air Cooling for CPU of IT Server

Liquid Cooling for IT/AI Server

4. How Do Liquid Cooling Systems for AI Servers Recover Stranded Compute Power?

When high-density computing clusters fail to meet their theoretical benchmarks, thermal throttling is a frequent culprit. Transitioning to advanced cooling architectures can recover much of this lost performance.

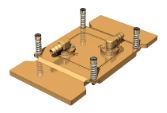

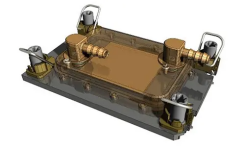

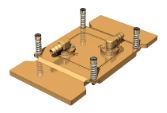

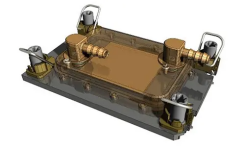

Liquid cooling systems for AI servers recover stranded compute power by utilizing microchannel cold plates to extract heat directly from the silicon. By improving heat exchange efficiency by 30% to 50%, liquid systems eliminate localized hotspots, allowing GPUs to maintain their maximum boost clocks continuously.

Consider a recent case study involving a major AI research institution deploying a large-scale computing cluster. Initially, the facility attempted to utilize a highly optimized traditional air cooling architecture. However, as they began training complex multi-modal models, system loads hit 100%. Within minutes, the telemetry data revealed severe issues: localized areas on the GPUs were hitting their thermal limits, the compute tasks frequently triggered thermal protection protocols (downclocking), and the overall arithmetic output of the cluster fell significantly below the theoretical design values. They were paying for peak performance but receiving mid-tier output.

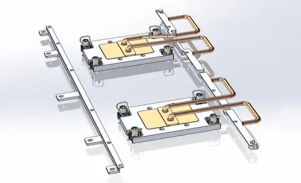

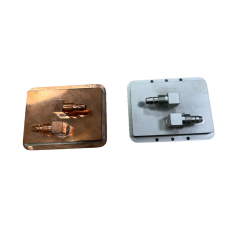

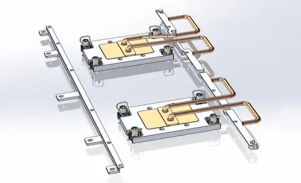

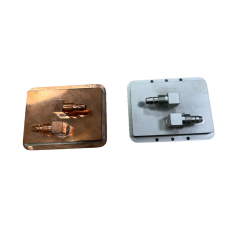

The facility initiated a retrofit, upgrading the racks to utilize liquid cooling systems for AI servers. Specifically, they deployed precision vacuum-brazed cold plates mounted directly to the GPUs and CPUs. The transformation was profound. Because water is far denser than air and holds far more heat per unit volume, the cold plates absorbed the heat spikes quickly. The thermal distribution across the motherboard became much more uniform. The most critical outcome, however, was compute recovery: equipment stability improved drastically, and delivered compute throughput returned to near 100% of the theoretical maximum. The liquid cooling system did not just "lower the temperature"; it rescued millions of dollars in stranded computational performance.

5. What Makes a Data Center Thermal Management System Truly "AI-Ready"?

Purchasing a liquid cold plate and attaching it to a GPU does not solve the macro-level challenges of a data center. An effective deployment requires a holistic engineering approach that views the entire facility as an integrated thermal loop.

A truly AI-ready thermal solution moves beyond single components, requiring deep system-level architecture capabilities, advanced thermal-fluid simulation to match dynamic workloads, and automated control systems that dynamically adjust coolant flow to changing hardware demands.

As the competitive landscape intensifies, AI-ready thermal solutions are defined by their system engineering and integration capabilities. A vendor capable of supporting a 2026 AI deployment must possess:

1. System Architecture Design: The ability to engineer the entire fluid path, from the microchannels inside the cold plate (to maximize surface area) to the Coolant Distribution Units (CDUs) and secondary facility loops.

2. Thermal Simulation & Load Matching: AI heat loads are not static. Expert engineers utilize advanced computational fluid dynamics (CFD) software to simulate how the system will react under massive, bursty workloads, ensuring the manifold designs do not create pressure bottlenecks.

3. Dynamic Control & Automation: Integrating smart sensors that communicate with the server management systems. If a specific node begins a heavy compute task, the cooling system must dynamically increase fluid flow to that specific zone before the silicon overheats.
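The third capability can be sketched as a simple control law. The example below is a hypothetical illustration, not a description of any vendor's CDU firmware: it combines a feed-forward term based on reported node power (so flow rises as soon as a heavy job starts) with a proportional correction on measured temperature, then clamps the result to the pump's range. Every gain, limit, and name is an assumed placeholder.

```python
from dataclasses import dataclass


@dataclass
class FlowController:
    """Hypothetical per-node coolant flow controller (all values assumed)."""
    target_temp_c: float = 70.0   # desired device temperature
    min_flow_lpm: float = 2.0     # pump/valve lower limit, liters per minute
    max_flow_lpm: float = 12.0    # pump/valve upper limit, liters per minute
    kp_lpm_per_c: float = 0.5     # proportional gain on temperature error
    lpm_per_kw: float = 1.5       # feed-forward flow per kW of node power

    def setpoint(self, device_temp_c: float, node_power_kw: float) -> float:
        """Return the coolant flow setpoint in liters per minute."""
        # Feed-forward reacts to load before the temperature has risen.
        feed_forward = self.lpm_per_kw * node_power_kw
        # Proportional term corrects any remaining temperature error.
        correction = self.kp_lpm_per_c * (device_temp_c - self.target_temp_c)
        raw = feed_forward + correction
        return max(self.min_flow_lpm, min(self.max_flow_lpm, raw))


controller = FlowController()
print(controller.setpoint(device_temp_c=55.0, node_power_kw=1.0))  # idle node
print(controller.setpoint(device_temp_c=70.0, node_power_kw=6.0))  # heavy job starting
print(controller.setpoint(device_temp_c=80.0, node_power_kw=6.0))  # heavy job, running hot
```

A production system would typically add integral action, rate limits, and sensor fault handling; the point here is only that load telemetry lets the loop respond before the silicon heats up.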

At Winshare Thermal Technology, we understand that isolated components cannot secure a data center. We focus on integrating our advanced brazed liquid cold plates into cohesive, system-level solutions that adapt seamlessly to the aggressive, continuous demands of modern AI server architectures.

6. Is Your Infrastructure Ready for Higher GPU Densities?

The transition to high-density liquid cooling is not merely an infrastructure upgrade; it is a strategic business mandate. Facilities that fail to adapt their thermal architecture will simply be unable to house the next generation of AI silicon.

To determine if your infrastructure is ready for higher GPU densities, evaluate if your current system is already triggering performance throttling, if your cooling energy costs are spiraling, and if your facility possesses the fluid integration capabilities required for future expansion.

Decision-makers must conduct a rigorous self-assessment. Ask your engineering teams:

● Can our current racks support the addition of next-generation 1000W+ GPUs without causing adjacent servers to overheat?

● Are we currently experiencing "silent" performance losses due to micro-throttling during peak training hours?

● Does our thermal roadmap include the integration of in-rack CDUs and direct-to-chip liquid cooling to manage the inevitable 50kW+ rack loads?

If the answer to any of these questions reveals a vulnerability, your current thermal management strategy is acting as a hard limit on your company's AI capabilities. Do not allow inadequate cooling to bottleneck your computational growth. Consulting with an experienced thermal engineering partner to audit your current infrastructure and design a scalable, liquid-based thermal loop is the most critical step toward securing your facility's operational future.
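As a starting point for the "silent" performance loss question above, a team could scan existing GPU telemetry for samples where the GPU is heavily loaded but running well below its boost clock. The sketch below assumes a hypothetical sample format of (utilization percent, clock in MHz) pairs; the 90% load threshold, the 5% tolerance, and the sample data are all illustrative.

```python
from typing import Iterable, Tuple


def throttled_fraction(
    samples: Iterable[Tuple[float, float]],
    boost_clock_mhz: float,
    min_utilization_pct: float = 90.0,
    tolerance: float = 0.05,
) -> float:
    """Fraction of heavily loaded samples whose clock sits more than
    `tolerance` below the boost clock. Returns 0.0 if no sample is loaded."""
    threshold = boost_clock_mhz * (1.0 - tolerance)
    loaded = [clock for util, clock in samples if util >= min_utilization_pct]
    if not loaded:
        return 0.0
    throttled = sum(1 for clock in loaded if clock < threshold)
    return throttled / len(loaded)


# Hypothetical telemetry: (utilization %, clock MHz) for one GPU.
telemetry = [(98, 1980), (99, 1700), (97, 1650), (40, 1200), (100, 1975)]
share = throttled_fraction(telemetry, boost_clock_mhz=1980)
print(f"{share:.0%} of loaded samples ran below the boost clock band")
```

A low clock under load can also come from power capping rather than heat, so a result like this is a prompt to check temperature and throttle-reason telemetry, not proof of a thermal problem.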

Conclusion

The AI thermal management challenges of 2026 are complex, heavily localized, and directly tied to the financial viability of computational clusters. Traditional air cooling has exhausted its physical capabilities in the face of 50kW rack densities. By recognizing that thermal constraints are actually compute constraints, and by embracing system-level, AI-ready liquid cooling solutions, data centers can eliminate performance throttling, drastically lower their PUE, and unlock the full, continuous power of their AI hardware investments.

Frequently Asked Questions (FAQ)

1. Why is AI thermal management different from traditional data center cooling?

Traditional cooling focuses on blowing cold air uniformly across a room to cool low-density 5-10kW racks. AI thermal management must target extreme, localized heat fluxes on specific silicon dies within high-density 20-50kW+ racks, usually requiring liquid cooling to prevent hardware throttling.

2. What happens to an AI server if the thermal management fails?

Long before any physical damage occurs, the GPUs and CPUs will trigger thermal protection protocols. They will automatically "throttle" or lower their operating frequency to generate less heat, which directly reduces the server's computational speed and wastes your hardware investment.

3. At what rack density is liquid cooling considered mandatory?

While highly optimized air containment can sometimes stretch to 20kW, a common rule of thumb is that rack densities exceeding 20kW to 25kW require direct-to-chip liquid cooling systems to maintain stable, efficient, and cost-effective operation.

4. How does liquid cooling improve a data center's PUE?

Cooling systems typically account for 20-40% of a data center's energy use. Per unit volume, water can carry roughly 3,000 times more heat than air. By replacing much of the high-wattage fan and air conditioning load with efficient liquid pumps, a facility reduces its total energy consumption, improving its Power Usage Effectiveness (PUE).

5. What is an "AI-ready" thermal solution?

It is a comprehensive, system-level cooling architecture designed specifically for the bursty, high-load nature of AI. It includes advanced microchannel cold plates, thermal fluid simulation to prevent pressure drops, and smart CDUs that dynamically adjust cooling based on real-time server workloads.

6. Can existing air-cooled data centers be upgraded to liquid cooling?

Yes. Many modern liquid cooling systems utilize a liquid-to-air or liquid-to-facility-water architecture. In-rack or row-based CDUs can be retrofitted into existing aisles to bring direct-to-chip liquid cooling to specific high-density AI clusters without completely rebuilding the data center.

